The Osprey event engine addresses the challenge of processing events and rules in real time under high load. Let’s look at how the architecture is structured and where its compromises lie.

The problem appears when the event stream is continuous but each response must remain synchronous. In platform-level systems handling hundreds of millions of actions per day, classic pipelines begin to introduce latency or produce inconsistent decisions. This is particularly noticeable in threat detection, where even a slight delay can change the outcome. Additional pressure comes from the need to update rules dynamically without stopping the system. At this point, the architecture faces a conflict between processing speed and flexibility of the logic.

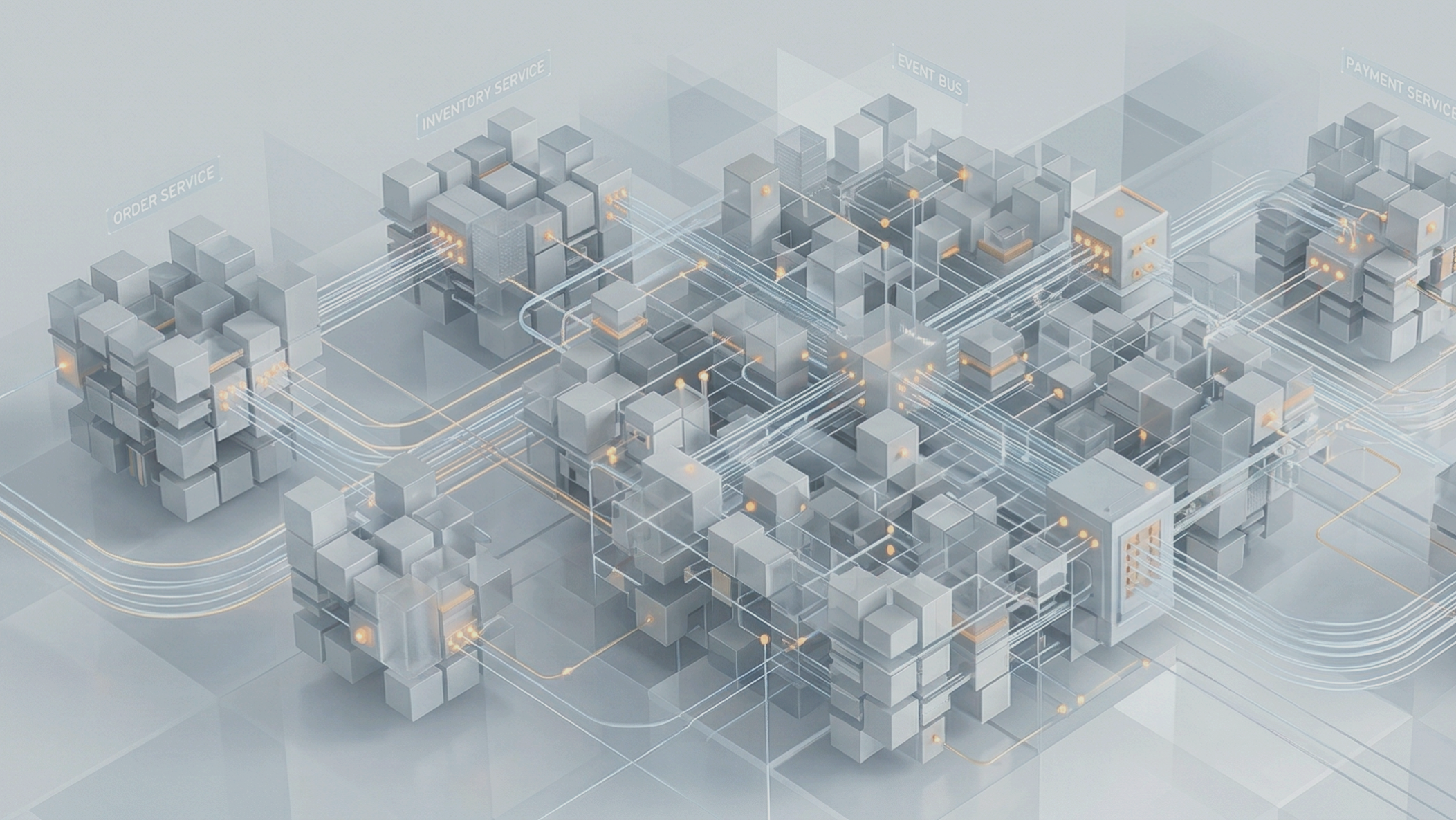

Osprey adopts a pragmatic compromise: it separates the system into a data plane and a control plane and takes a polyglot approach. A coordinator written in Rust manages event streams and concurrency, while Python workers execute the business logic of the rules. This reflects a common industry pattern: Rust provides predictable performance and memory control, while Python offers development speed and extensibility. The trade-off is clear: the operational model and observability become more complex, but throughput and flexibility improve. In an event-driven architecture, this lets processing scale without being tightly coupled to the rule logic.

The implementation revolves around several key elements. Events arrive as JSON objects called Actions and pass through a coordinator that manages asynchronous streams and prioritizes gRPC requests to keep latency stable. Rule processing occurs on stateless Python workers. To avoid repeated interpretation overhead, rules written in SML (a DSL with Python syntax) are compiled into an abstract syntax tree (AST) at startup. This shifts the computational load to the initialization phase and reduces the cost of processing each event.
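The parse-once, evaluate-many idea can be sketched with Python's standard `ast` module. This is an illustration only, not Osprey's SML grammar or evaluator: the rule text, the field names (`failed_logins`, `account_age_days`), and all helper names are hypothetical.

```python
import ast
import operator

# Hypothetical whitelist of comparison operators allowed in a rule expression.
_COMPARE = {
    ast.Gt: operator.gt,
    ast.Lt: operator.lt,
    ast.GtE: operator.ge,
    ast.LtE: operator.le,
    ast.Eq: operator.eq,
    ast.NotEq: operator.ne,
}


def parse_rule(source: str) -> ast.expr:
    """Parse a rule expression once, at startup, and return its AST."""
    return ast.parse(source, mode="eval").body


def evaluate(node: ast.expr, action: dict) -> object:
    """Walk a pre-parsed AST against one Action (a decoded JSON object)."""
    if isinstance(node, ast.BoolOp):
        values = (evaluate(v, action) for v in node.values)
        return all(values) if isinstance(node.op, ast.And) else any(values)
    if isinstance(node, ast.Compare) and len(node.ops) == 1:
        left = evaluate(node.left, action)
        right = evaluate(node.comparators[0], action)
        return _COMPARE[type(node.ops[0])](left, right)
    if isinstance(node, ast.Name):
        return action[node.id]  # field lookup in the Action payload
    if isinstance(node, ast.Constant):
        return node.value
    raise ValueError(f"unsupported syntax: {type(node).__name__}")


# Startup phase: every rule is parsed exactly once.
RULES = {
    "many_failed_logins": parse_rule("failed_logins > 5 and account_age_days < 7"),
}


def matching_rules(action: dict) -> list:
    """Per-event phase: only tree walking remains, no parsing."""
    return [name for name, tree in RULES.items() if evaluate(tree, action)]
```

Calling `matching_rules({"failed_logins": 9, "account_age_days": 2})` walks the stored tree and reports the rule as matched; the text of the rule is never parsed again after startup.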

Dynamic behavior is provided through etcd: rule changes are propagated to workers without a redeploy. This is critical for systems where logic changes must take effect immediately. The stateless design and containerization with Docker allow processing to scale horizontally during peak loads. Integration with external systems goes through output sinks implemented with Pluggy, which replaces rigid internal dependencies with extensible integration points.
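One way to picture rule updates reaching a running worker is a versioned snapshot that is replaced as a whole. The sketch below is a stand-in built on stated assumptions: `RuleStore` and `apply_update` are hypothetical names, and updates arrive through a direct method call rather than a real etcd watch.

```python
import threading
from typing import Callable, Dict, Tuple

Rule = Callable[[dict], bool]


class RuleStore:
    """Holds the active rule set and swaps it atomically on update."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._version = 0
        self._rules: Dict[str, Rule] = {}

    def apply_update(self, version: int, rules: Dict[str, Rule]) -> bool:
        """Would be called from a watch callback; stale versions are ignored."""
        with self._lock:
            if version <= self._version:
                return False
            self._version = version
            # Replace the whole mapping instead of mutating it in place,
            # so snapshots already handed out are never changed.
            self._rules = dict(rules)
            return True

    def snapshot(self) -> Tuple[int, Dict[str, Rule]]:
        """Return (version, rules) to use for the duration of one event."""
        with self._lock:
            return self._version, self._rules


store = RuleStore()
store.apply_update(1, {"big_payment": lambda a: a["amount"] > 100})
version, rules = store.snapshot()  # an in-flight event keeps this view
store.apply_update(2, {"big_payment": lambda a: a["amount"] > 1000})
```

An event that took its snapshot before the second update finishes under version 1, while the next event sees version 2. No process restart is involved in either case.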

The extension model built on User Defined Functions (UDFs) deserves particular attention. UDFs are written in Python and effectively form the system’s standard library. They give rules access to external APIs and ML models, but they also introduce the risk of uncontrolled dependencies and increased latency. Here, the architecture bets on disciplined usage rather than strict limitations.
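The latency risk can be made concrete with a small sketch of a UDF registry that gives each function a time budget and a fallback value. This is not Osprey's UDF API: the `udf` decorator, `call_udf`, and both example functions are hypothetical, and the thread-pool timeout is just one possible form of the discipline mentioned above.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from concurrent.futures import TimeoutError as FutureTimeout

UDFS = {}  # name -> (function, timeout in seconds, fallback value)
_POOL = ThreadPoolExecutor(max_workers=4)


def udf(name, timeout_s, default):
    """Register a function as a UDF with a latency budget and a fallback."""
    def register(fn):
        UDFS[name] = (fn, timeout_s, default)
        return fn
    return register


def call_udf(name, *args):
    """Run a UDF, returning its fallback if the budget is exceeded."""
    fn, timeout_s, default = UDFS[name]
    future = _POOL.submit(fn, *args)
    try:
        return future.result(timeout=timeout_s)
    except FutureTimeout:
        # The worker thread still runs to completion in the background;
        # only the caller stops waiting for it.
        return default


@udf("domain_of", timeout_s=0.5, default="")
def domain_of(email):
    return email.rsplit("@", 1)[-1].lower()


@udf("slow_reputation", timeout_s=0.05, default=0.0)
def slow_reputation(ip):
    time.sleep(0.3)  # stands in for a slow external API or model call
    return 0.9
```

Here `call_udf("slow_reputation", "10.0.0.1")` gives up after its 50 ms budget and returns the fallback, so one slow dependency does not stall rule evaluation. The cost is that the rule then decides on a default rather than on real data.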

From a data perspective, the system operates on Entities—objects for which state is maintained and labels are applied. This allows for the construction of contextual rules but requires careful state management to avoid breaking consistency during scaling. The original description does not disclose details of state storage, leaving the question of its resilience during failures open.
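A contextual rule in this sense is one whose verdict depends on labels attached to the same entity by earlier events. The sketch below illustrates that with an in-memory dictionary. Because the source does not describe how state is stored, `LabelStore`, the `suspicious` label, and the action fields are all assumptions made for illustration.

```python
from collections import defaultdict
from typing import NamedTuple


class Entity(NamedTuple):
    kind: str  # e.g. "user" or "ip"
    id: str


class LabelStore:
    """In-memory stand-in for whatever storage actually holds entity state."""

    def __init__(self) -> None:
        self._labels = defaultdict(set)

    def add(self, entity: Entity, label: str) -> None:
        self._labels[entity].add(label)

    def has(self, entity: Entity, label: str) -> bool:
        # .get avoids creating an empty entry for unknown entities
        return label in self._labels.get(entity, ())


def handle_action(action: dict, labels: LabelStore) -> str:
    """Decide on one action, reading and writing labels on its user entity."""
    user = Entity("user", action["user_id"])
    if action["type"] == "login_failed" and action["attempts"] > 5:
        labels.add(user, "suspicious")
        return "flag"
    if action["type"] == "payment" and labels.has(user, "suspicious"):
        return "review"
    return "allow"
```

With a process-local dictionary, two workers would disagree about which users are labeled. That is the consistency problem the paragraph above warns about once processing is scaled horizontally.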

Results indicate that the system can process up to 2.3 million rules per second under loads of hundreds of millions of events per day. However, precise metrics on latency and errors are not disclosed. Architecturally, the system demonstrates resilience through a separation of responsibilities: Rust stabilizes the flow, while Python accelerates the evolution of logic. Kafka and Druid are used for routing and analytics, closing the loop from processing to observability.

In conclusion, Osprey is not an attempt to simplify event processing but an acknowledgment of its complexity. The architecture prioritizes scalability and dynamic rule updates, accepting higher operational costs as an inevitable compromise. This approach is becoming standard for high-load systems, where sustaining the data stream matters more than simplicity of execution.